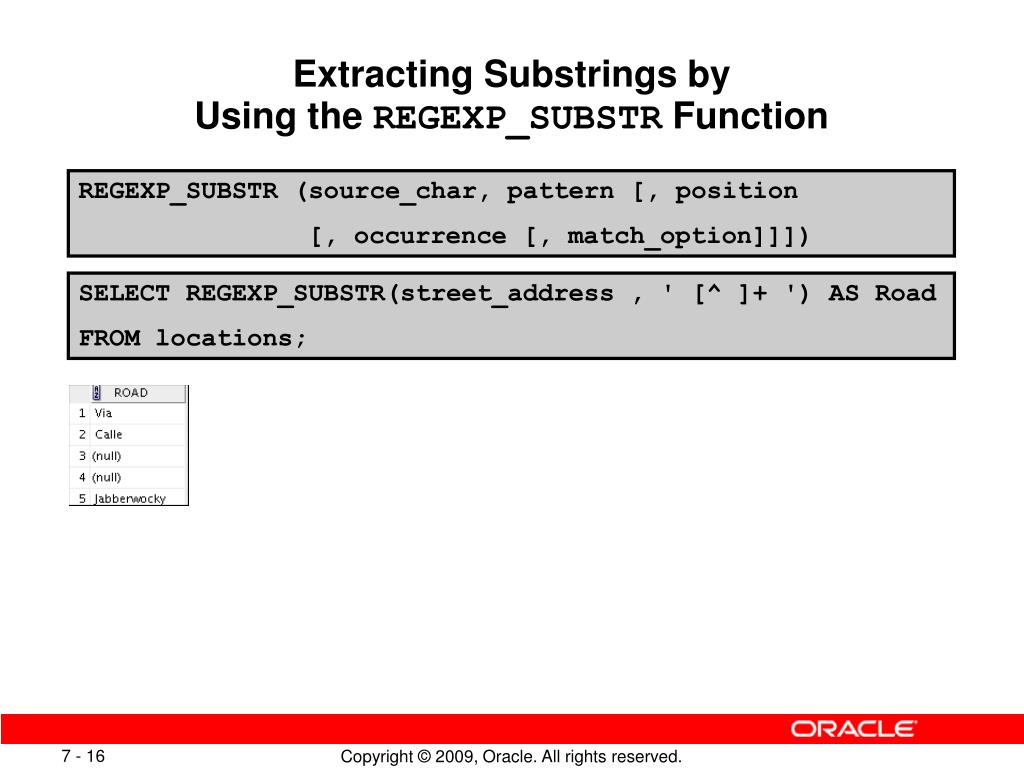

In this article, you will learn about Amazon Redshift String Functions and how to use them. Let's start with a brief introduction to Amazon Redshift, and then dive into the String Functions.

Introduction to Amazon Redshift

Amazon Redshift is a Data Warehouse product that is part of the broader Cloud Computing platform Amazon Web Services. The "red" in its name is a reference to Oracle, whose corporate color is red and which is informally referred to as "Big Red". It's built on ParAccel's (later Actian's) massively parallel processing (MPP) Data Warehouse technology, which can handle large data volumes and Database Migrations. With tens of thousands of users analyzing exabytes of data and running complex Analytical queries, Amazon Redshift is the most widely used Cloud Data Warehouse. You can run and scale Analytics on all of your data in seconds without having to manage your Data Warehouse infrastructure.

Understanding Amazon Redshift String Functions

The following walkthrough queries Amazon Redshift audit logs with Redshift Spectrum and uses the REPLACE and REGEXP_SUBSTR String Functions to split each log record into columns.

Before you use Redshift Spectrum, complete the following tasks:

1. Turn on your audit logs. Note: It might take some time for your audit logs to appear in your Amazon Simple Storage Service (Amazon S3) bucket.
2. Create an AWS Identity and Access Management (IAM) role.
3. Associate the IAM role to your Amazon Redshift cluster.

To query your audit logs in Redshift Spectrum, create external tables, and then configure them to point to a common folder (used by your files). Then, use the hidden $path column and regex function to create views that generate the rows for your analysis.

To query your audit logs in Redshift Spectrum, follow these steps:

1. Create an external schema:

        create external schema s_audit_logs
        ...
        iam_role 'arn:aws:iam::your_account_number:role/role_name' create external database if not exists;

   Replace your_account_number to match your real account number. For role_name, specify the IAM role attached to your Amazon Redshift cluster.

2. Create the external tables. Note: In the following examples, replace bucket_name, your_account_id, and region with your bucket name, account ID, and AWS Region.

   Create a user activity logs table:

        create external table s_audit_logs.user_activity_log(
        ...
        LOCATION 's3://bucket_name/logs/AWSLogs/your_account_id/redshift/region';

   Create a connection log table:

        CREATE EXTERNAL TABLE s_audit_logs.connections_log(
        event varchar(60), recordtime varchar(60),
        remotehost varchar(60), remoteport varchar(60),
        ...
        username varchar(60), authmethod varchar(60),
        ...
        sslcompression varchar(70), sslexpansion varchar(70),
        iamauthguid varchar(50), application_name varchar(300))
        ...
        LOCATION 's3://bucket_name/logs/AWSLogs/your_account_id/redshift/region';

   Create a user log table:

        create external table s_audit_logs.user_log(
        ...
        LOCATION 's3://bucket_name/logs/AWSLogs/your_account_id/redshift/region';

3. Create a local schema to view the audit logs:

        create schema audit_logs_views;

4. To access the external tables, create views in a database with the WITH NO SCHEMA BINDING option:

        CREATE VIEW audit_logs_views.v_connections_log AS
        ...

   The returned files are restricted by the hidden $path column to match the connectionlog entries.

   In the following example, the hidden $path column and regex function restrict the files that are returned for v_useractivitylog:

        CREATE or REPLACE VIEW audit_logs_views.v_useractivitylog AS
        ...
        replace(regexp_substr(logrecord, 'user=[^ ]*'), 'user=', '') AS user,
        replace(regexp_substr(logrecord, 'pid=[^ ]*'), 'pid=', '') AS pid,
        replace(regexp_substr(logrecord, 'userid=[^ ]*'), 'userid=', '') AS userid,
        replace(regexp_substr(logrecord, 'xid=[^ ]*'), 'xid=', '') AS xid,
        replace(regexp_substr(logrecord, '][^*]*'), ']', '') AS query
        ...

   The returned files match the useractivitylog entries.

Note: There's a limitation that's related to the multi-row queries in user activity logs. It's a best practice to query the column log records directly.
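To see what the REPLACE(REGEXP_SUBSTR(...)) pairs in the user activity log view do to a single record, the same two-step logic can be tried outside Redshift. The Python sketch below is only an illustration under stated assumptions: the sample record is hypothetical, the field patterns assume each value runs up to the next space, and Python's `re` module stands in for Redshift's POSIX regular expressions, which may behave differently in edge cases. The helper names `regexp_substr` and `parse_record` are invented for this example.

```python
import re

# Hypothetical sample record, loosely modeled on a user activity log line.
SAMPLE = "'2024-01-01T00:00:00Z UTC [ db=dev user=alice pid=1234 userid=100 xid=5678 ]' LOG: select 1;"


def regexp_substr(text, pattern):
    """Return the first substring matching pattern, or '' when nothing matches."""
    match = re.search(pattern, text)
    return match.group(0) if match else ""


def parse_record(logrecord):
    """Split one log record into fields, mirroring the view's column expressions."""
    fields = {}
    for key in ("user", "pid", "userid", "xid"):
        prefix = key + "="
        # Step 1: grab 'key=value' up to the next space.
        # Step 2: strip the 'key=' prefix, as REPLACE does in the view.
        fields[key] = regexp_substr(logrecord, prefix + r"[^ ]*").replace(prefix, "")
    # Everything from the first ']' up to the first '*' (or the end), minus every ']'.
    fields["query"] = regexp_substr(logrecord, r"\][^*]*").replace("]", "")
    return fields


if __name__ == "__main__":
    for name, value in parse_record(SAMPLE).items():
        print(f"{name}: {value!r}")
```

With this sample, the query field keeps the closing quote and the LOG: prefix that follow the bracket, so further trimming may be needed before analysis.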
